Why voice?

As telco customers, Yoigo users are used to talking to their provider over the phone. Many still need telephone assistance to complete their purchases. Most of the time, they simply call to verify information that is already available on the web, or because they are looking for more personal advice. Unfortunately, call centers are usually busy. This causes long waits for our users and high costs for the company. We wanted to explore voice user interfaces to assist our web users and personalise their experience.

Let’s start… at the beginning

We defined the use cases that best fit this channel based on the following criteria:

- The interaction already exists as a human-to-human conversation, and it is relatively brief, with few and short branches.

- Users might have to navigate through many pages to complete this interaction on the web.

- Users might want to multitask while completing this interaction.

Research. Looking into real conversations

Before writing new scripts and dialog flows, we decided to listen to and transcribe the real-life conversations that our users were already having with human agents on the telephone. We learned a lot about how agents recommended products based on the clients’ input and also about the questions our clients asked most frequently. We also “faked” some of these conversations ourselves, in order to empathise with the user and identify the parts of the conversation that an AI might have more difficulty understanding. Once we were able to look at all these transcriptions together, some patterns started to emerge.

Time to build the real thing

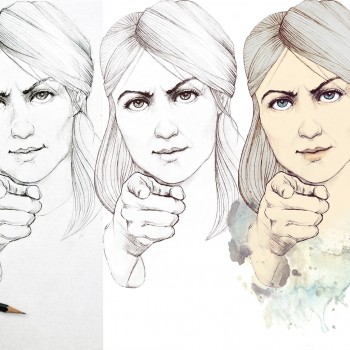

Finding the right voice

In order to start talking, we also needed to define our own voice. Our brand guidelines described Yoigo’s witty and easy-going personality, but we needed more detail to know how that personality actually sounds. We created a persona based on these characteristics and added some language guidelines, with examples of what to say and what not to say.

User journeys and conversational design

Based on our analysis, we started to write our dialogs and training phrases. We tried to keep these interactions short and simple, identifying possible crossroads and questions that might lead from one flow to another. We also prepared different dialog scripts for visual and spoken interfaces, and for Google Assistant and Alexa.

User testing and iterations

Our first tests revealed that users enjoyed the assistant… too much. Our first MVP was limited to only three kinds of interactions, but users demanded much more. We decided to add a set of short question-answer interactions and a general fallback option that relied on the “help” content of the Yoigo website to provide more context and general support for users.
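The routing described above (scripted flows first, help content as a general fallback) can be sketched roughly like this. It is a minimal illustration under stated assumptions, not Yoigo’s implementation: the intent names, training phrases and help-article titles are hypothetical, and the simple word-overlap score stands in for the natural-language understanding that Google Assistant or Alexa would actually provide.

```python
from __future__ import annotations

import re

# Hypothetical intents and training phrases (illustrative, not Yoigo's real ones).
INTENTS = {
    "check_coverage": ["is there coverage in my area", "check coverage"],
    "compare_rates": ["which rate suits me", "compare mobile rates"],
}

# Hypothetical stand-in for the website's "help" content: title -> keywords.
HELP_ARTICLES = {
    "How to activate roaming": "roaming abroad activate travel",
    "How to port your number": "port number keep switch operator",
}


def tokens(text: str) -> set[str]:
    """Lower-cased set of words; a crude substitute for real NLU."""
    return set(re.findall(r"\w+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two token sets have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def respond(utterance: str, threshold: float = 0.5) -> tuple[str, str | None]:
    words = tokens(utterance)

    # 1. Try the scripted flows: pick the intent whose training phrase matches best.
    best_intent, best_score = None, 0.0
    for intent, phrases in INTENTS.items():
        for phrase in phrases:
            score = jaccard(words, tokens(phrase))
            if score > best_score:
                best_intent, best_score = intent, score
    if best_score >= threshold:
        return ("intent", best_intent)

    # 2. General fallback: suggest the help article sharing the most words.
    best_article, best_hits = None, 0
    for title, keywords in HELP_ARTICLES.items():
        hits = len(words & tokens(f"{title} {keywords}"))
        if hits > best_hits:
            best_article, best_hits = title, hits
    if best_article is not None:
        return ("help", best_article)

    # 3. Nothing matched at all.
    return ("no_match", None)
```

For example, `respond("check coverage")` lands in the scripted `check_coverage` flow, while `respond("how do I activate roaming")` matches no intent and falls back to the roaming help article. The 0.5 threshold is an arbitrary assumption; in practice it would be tuned during user testing.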